Amidst an era-defining debate about personal data, many studies have emerged to suggest that, around the world, people are increasingly concerned about privacy. One study even claims that fears about privacy are on a par with global terrorism and economic recession. No surprise, perhaps, in the wake of Snowden’s revelations and high-profile security breaches on everyday services like iCloud. Commercial opportunity ensues: half of internet users claim they will pay for services to protect themselves.

Looking Closer

New research from Telefónica suggests a more complex situation, not least because concern doesn’t appear to translate into action or prevention. People continue to share data liberally with the likes of Google, Facebook or Amazon in exchange for value, convenience and belonging, with apparent disregard for their terms and conditions (a mere 26% admit to reading them). Only one fifth of people make use of private browsing, a simple and free option for privacy protection.

Rather than taking the privacy concerns claimed in a plethora of studies at face value, let alone treating them as an indicator of a need around which to innovate, Telefónica set out to understand the tension in everyday life between people’s desire for value, convenience and belonging on the one hand, and privacy protection on the other. How might the two co-exist? Further, can data really be treated as a generic entity in research that aims to understand what people really need from their data-fuelled products and services?

To answer these questions, Telefónica conducted a new type of study, designed to uncover what people really know, think and feel about their personal data.

How To Ask Without Asking

To get a better understanding of people’s relationship with their personal data, the starting point for Telefónica’s research was simply to ask without asking. After all, how front of mind is data privacy during the 1,500 times people reach for their mobile each week, or the nine hours they spend online each day?

The methodology banned words and themes associated with the personal data debate, as they are riddled with misinterpretation and baggage. Expressions like ‘personal data’, ‘privacy’, ‘surveillance’, and ‘anonymity’ were avoided, and there was no active probing around concerns or worries.

Instead, the study explored people’s relationship with the Internet (the context in which data is shared); how, where and why they believe they are sharing data; how this makes them feel; what this makes them do, and not do, and why; and what they perceive their needs to be.

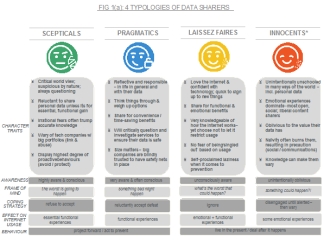

Four Typologies of Data Sharers

Four attitudinal typologies of data sharers were created: ‘Scepticals’, ‘Pragmatics’, ‘Laissez Faires’ and ‘Innocents’. What started as a hypothetical framework was taken apart and rebuilt throughout a rigorous qualitative research phase with a view to ‘humanising’ data sharers: something the endless bar and pie charts from other studies have so far failed to do.

The typologies were then quantified in Spain, the UK, Germany and Brazil, and behaviors and needs were identified in relation to specific types of personal data.

Five Key Takeaways

1) Context Is Critical

Any approach to research and innovation related to personal data should take into account that the Internet is an integral and much loved part of life. Its practical and emotional benefits outweigh the risks associated with sharing data. People generally do not want to have to think about the possible negative consequences.

Nobody wants to do less online or face more complexity in return for security or privacy protection.

That is why Scepticals and Pragmatics, in spite of their pessimistic and defeatist attitudes respectively, enjoy and appreciate the functional and informative aspects of, for example, social platforms.

2) Uncertainty Is Universal

Telefónica’s study found that few people have an accurate idea of how data collection works, such as where data goes or to what end. When asked to guess, most admit they don’t know; presumed marketing-related reasons are a close second. Many also think that data ends up in the ether or on hardware of some kind. Whilst this unsettles Scepticals and Pragmatics, Laissez Faires and Innocents refuse to let it concern them.

However, in the context of some specific data, people are more than able to link actions, such as browsing or ‘window shopping’ and frequent use of a service, to specific consequences – mainly around personalization of ads, service interfaces and content. Yet the frequency of these experiences has normalized them, and because people do not actively associate them with data collection, they are not seen as an abuse of their data or privacy.

3) Knowledge Does Not Shape Attitudes

Contrary to the assumption that attitudes around personal data are informed by the knowledge people acquire and hold, Telefónica’s research revealed that they are informed by a blend of three things:

- Life stage, and what is at stake in the real world (children, mortgage, finances, career)

- Temporary external influences, suggesting what the risks could be (e.g. media reports of security breaches on everyday services, like cloud storage or financial transactions)

- Character, and how people respond to the world around them at large (e.g. a generally suspicious disposition results in a different attitude towards personal data than an open, carefree one).

4) Sentiment Is Specific

An exercise that involved listing and grouping different forms of data people believe they are generating and sharing online helped to show where perceived sensitivities lie.

For example, data shared when prompted by services, like financial and personal details, is considered the most sensitive. This is because of the severe real-world problems that can follow from data falling into the wrong hands, such as losing money or being the victim of a physical crime.

On the other hand, data that is generated by default, by simply using the web, or volunteered, mainly on social platforms, was perceived to be less sensitive. The former is accepted as normal, whilst the latter is shared knowingly, in spite of imagined consequences of images or text falling into the wrong hands.

5) Cybercrime Trumps Privacy

Whilst the data debate revolves around the right to privacy, this study shows that people’s needs are in fact more related to cybercrime than to privacy. Security – the need for safety from interference, abuse, and theft – is the dominant need across all typologies. The theme repeated itself several times throughout the study: the most significant perceived risk is that the data people share may fall into the hands of criminals and hackers.

Corporate accountability and the promise of safekeeping of data are also important. This suggests a desire for effortlessness: people do not want to go out of their way to look after data. Rather, they expect those who receive data to do so, but in a way that does not obstruct the use of, and benefits from, products and services, or the Internet in general.

Finally, the study identified a specific need for privacy around all forms of search, which also reflects a need for anonymity. It is critical to consider privacy as an enabler of free thought, an ideal condition for human curiosity to thrive. Although this is not a new perspective in the greater debate, it is important to remember when working to protect privacy.

The People’s Perspective In Summary

Numerous studies and news reports would have us believe that the NSA or GCHQ are top of mind every time someone logs into a social network, buys something, searches for information or sends and receives WhatsApp messages.

Yet most people dismissed the notion of being spied on as a major concern, believing that they are simply too insignificant to be of any interest to anyone, let alone to government agencies.

Instead, people need to know that their data is safe from the kinds of use that can cause real harm, and that companies are held accountable if it is abused.

Market Context

The evolving privacy and security industry, anticipated to grow by almost 75% between now and 2018, is increasingly playing to perceived anxieties to stimulate fear and demand for products and services that promise privacy protection.

Apple CEO Tim Cook recently set the company apart from its Silicon Valley rivals by saying: “…some of the most prominent and successful companies have built their businesses by lulling their customers into complacency about their personal information. They’re gobbling up everything they can learn about you and trying to monetize it. We think that’s wrong. And it’s not the kind of company that Apple wants to be.”

Coming from an admired leader of one of the world’s most loved brands, it is not impossible to imagine how such prime time rhetoric could take the debate mainstream, and set new benchmarks for people’s expectations of honesty and transparency from the other companies in their lives.

Implications for Innovation

The collection, application and monetization of big data play an integral role in the future of a digital telco’s business. With automation on the horizon, and as the connections between people, services and objects increase, so will the quantity and richness of the data generated. It is impossible to imagine that people will refuse the huge benefits of value, convenience or belonging that data-driven innovation drawing on this richness will offer. Organizations like Telefónica must therefore continue to ensure that when creating any form of value for customers from their data, it is securely generated and collected, linked transparently, and managed in an accountable way.